Research in Lifespan Development

What you’ll learn to do: examine how to do research in lifespan development

How do we know what changes and stays the same (and when and why) in lifespan development? We rely on research that utilizes the scientific method so that we can have confidence in the findings. How data are collected may vary by age group and by the type of information sought. The developmental design (for example, following individuals as they age over time or comparing individuals of different ages at one point in time) will affect the data and the conclusions that can be drawn from them about actual age changes. What do you think are the particular challenges or issues in conducting developmental research, such as with infants and children? Read on to learn more.

Learning Outcomes

- Explain how the scientific method is used in researching development

- Compare various types and objectives of developmental research

- Describe methods for collecting research data (including observation, survey, case study, content analysis, and secondary content analysis)

- Explain correlational research

- Describe the value of experimental research

- Compare advantages and disadvantages of developmental research designs (cross-sectional, longitudinal, and sequential)

- Describe challenges associated with conducting research in lifespan development

Research in Lifespan Development

How do we know what we know?

An important part of learning any science is having a basic knowledge of the techniques used in gathering information. The hallmark of scientific investigation is that of following a set of procedures designed to keep questioning or skepticism alive while describing, explaining, or testing any phenomenon. Not long ago a friend said to me that he did not trust academicians or researchers because they always seem to change their story. That, however, is exactly what science is all about; it involves continuously renewing our understanding of the subjects in question and an ongoing investigation of how and why events occur. Science is a vehicle for going on a never-ending journey. In the area of development, we have seen changes in recommendations for nutrition, in explanations of psychological states as people age, and in parenting advice. So think of learning about human development as a lifelong endeavor.

Personal Knowledge

How do we know what we know? Take a moment to write down two things that you know about childhood. Okay. Now, how do you know? Chances are you know these things based on your own history (experiential reality), what others have told you, or cultural ideas (agreement reality) (Seccombe and Warner, 2004). There are several problems with personal inquiry, or drawing conclusions based on our personal experiences.

Read the following sentence aloud:

Paris in the

the spring

Are you sure that is what it said? Read it again:

Paris in the

the spring

If you read it differently the second time (adding the second “the”), you just experienced one of the problems with relying on personal inquiry; that is, the tendency to see what we believe. Our assumptions very often guide our perceptions; consequently, when we believe something, we tend to see it even if it is not there. Have you heard the saying, “seeing is believing”? Well, the truth is just the opposite: believing is seeing. This problem may just be a result of cognitive ‘blinders’ or it may be part of a more conscious attempt to support our own views. Confirmation bias is the tendency to look for evidence that we are right and, in so doing, to ignore contradictory evidence.

Philosopher Karl Popper suggested that the distinction between that which is scientific and that which is unscientific is that science is falsifiable; scientific inquiry involves attempts to reject or refute a theory or set of assumptions (Thornton, 2005). A theory that cannot be falsified is not scientific. And much of what we do in personal inquiry involves drawing conclusions based on what we have personally experienced or validating our own experience by discussing what we think is true with others who share the same views.

Science offers a more systematic way to make comparisons and guard against bias. One technique used to avoid sampling bias is to select participants for a study in a random way. This means using a technique to ensure that all members of the population have an equal chance of being selected. Simple random sampling may involve using a set of random numbers as a guide in determining who is to be selected. For example, if we have a list of 400 people and wish to randomly select a smaller group or sample to be studied, we use a list of random numbers and select the cases that correspond with those numbers (Case 39, 3, 217, etc.). This is preferable to asking only those individuals with whom we are familiar to participate in a study; if we conveniently choose only people we know, we learn nothing about those who had no opportunity to be selected. There are many more elaborate techniques that can be used to obtain samples that represent the composition of the population we are studying. But even though a randomly selected representative sample is preferable, it is not always used because of costs and other limitations. As a consumer of research, however, you should know how the sample was obtained and keep this in mind when interpreting results. It is possible that what was found was limited to that sample or similar individuals and not generalizable to everyone else.
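The random-number procedure described above can be sketched in a few lines of Python. This is only an illustration: the function name `simple_random_sample`, the sample size of 20, and the seed value are assumptions made for the example, not part of any particular study.

```python
import random

def simple_random_sample(population_size, sample_size, seed=None):
    """Return case numbers (1-based) drawn so that every case has an equal chance."""
    rng = random.Random(seed)  # a fixed seed only makes the draw repeatable
    case_numbers = range(1, population_size + 1)
    # sample() draws without replacement, so no case can be selected twice
    return sorted(rng.sample(case_numbers, sample_size))

# Hypothetical study: select 20 cases from a numbered list of 400 people
selected_cases = simple_random_sample(population_size=400, sample_size=20, seed=39)
print(selected_cases)
```

Because the computer, not the researcher, decides which case numbers are drawn, familiarity with particular people cannot influence who ends up in the sample.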

Scientific Methods

The particular method used to conduct research may vary by discipline and since lifespan development is multidisciplinary, more than one method may be used to study human development. One method of scientific investigation involves the following steps:

- Determining a research question

- Reviewing previous studies addressing the topic in question (known as a literature review)

- Determining a method of gathering information

- Conducting the study

- Interpreting the results

- Drawing conclusions; stating limitations of the study and suggestions for future research

- Making the findings available to others (both to share information and to have the work scrutinized by others)

The findings of these scientific studies can then be used by others as they explore the area of interest. Through this process, a literature or knowledge base is established. This model of scientific investigation presents research as a linear process guided by a specific research question. And it typically involves quantitative research, which relies on numerical data or using statistics to understand and report what has been studied.

Another model of research, referred to as qualitative research, may involve steps such as these:

- Begin with a broad area of interest and a research question

- Gain entrance into a group to be researched

- Gather field notes about the setting, the people, the structure, the activities or other areas of interest

- Ask open-ended, broad “grand tour” types of questions when interviewing subjects

- Modify research questions as the study continues

- Note patterns or consistencies

- Explore new areas deemed important by the people being observed

- Report findings

In this type of research, theoretical ideas are “grounded” in the experiences of the participants. The researcher is the student and the people in the setting are the teachers as they inform the researcher of their world (Glaser & Strauss, 1967). Researchers should be aware of their own biases and assumptions, acknowledge them, and bracket them in an effort to keep them from limiting accuracy in reporting. Sometimes qualitative studies are used initially to explore a topic and more quantitative studies are used to test or explain what was first described.

A good way to become more familiar with these scientific research methods, both quantitative and qualitative, is to look at journal articles, which are written in sections that follow these steps in the scientific process. Most psychological articles and many papers in the social sciences follow the writing guidelines and format dictated by the American Psychological Association (APA) (https://owl.english.purdue.edu/owl/section/2/10/). In general, the structure follows: abstract (summary of the article), introduction or literature review, methods explaining how the study was conducted, results of the study, discussion and interpretation of findings, and references.

Link to Learning

Brené Brown is a bestselling author and social work professor at the University of Houston. She conducts grounded theory research by collecting qualitative data from large numbers of participants. In Brené Brown’s TED Talk The Power of Vulnerability (https://www.youtube.com/watch?time_continue=177&v=iCvmsMzlF7o), Brown refers to herself as a storyteller-researcher as she explains her research process and summarizes her results.

Research Methods and Objectives

The main categories of psychological research are descriptive, correlational, and experimental research. Research studies that do not test specific relationships between variables are called descriptive, or qualitative, studies. These studies are used to describe general or specific behaviors and attributes that are observed and measured. In the early stages of research it might be difficult to form a hypothesis, especially when there is not any existing literature in the area. In these situations designing an experiment would be premature, as the question of interest is not yet clearly defined as a hypothesis. Often a researcher will begin with a non-experimental approach, such as a descriptive study, to gather more information about the topic before designing an experiment or correlational study to address a specific hypothesis. Some examples of descriptive questions include:

- “How much time do parents spend with children?”

- “How many times per week do couples have intercourse?”

- “When is marital satisfaction greatest?”

The main types of descriptive studies include observation, case studies, surveys, and content analysis (which we’ll examine further in the module). Descriptive research is distinct from correlational research, in which psychologists formally test whether a relationship exists between two or more variables. Experimental research goes a step beyond descriptive and correlational research: it randomly assigns people to different conditions and uses hypothesis testing to make inferences about how these conditions affect behavior. Some experimental research includes explanatory studies, which are efforts to answer the question “why,” such as:

- “Why have rates of divorce leveled off?”

- “Why are teen pregnancy rates down?”

- “Why has the average life expectancy increased?”

Evaluation research is designed to assess the effectiveness of policies or programs. For instance, research might be designed to study the effectiveness of safety programs implemented in schools for installing car seats or fitting bicycle helmets. Do children who have been exposed to the safety programs wear their helmets? Do parents use car seats properly? If not, why not?

Watch It

This Crash Course video provides a brief overview of psychological research, which we’ll cover in more detail on the coming pages.

Research Methods

We have just learned about some of the various models and objectives of research in lifespan development. Now we’ll dig deeper to understand the methods and techniques used to describe, explain, or evaluate behavior.

All types of research methods have unique strengths and weaknesses, and each method may only be appropriate for certain types of research questions. For example, studies that rely primarily on observation produce large amounts of detailed information, but the ability to apply this information to the larger population is somewhat limited because of small sample sizes. Survey research, on the other hand, allows researchers to easily collect data from relatively large samples. While this allows for results to be generalized to the larger population more easily, the information that can be collected on any given survey is somewhat limited and subject to problems associated with any type of self-reported data. Some researchers conduct archival research by using existing records. While this can be a fairly inexpensive way to collect data that can provide insight into a number of research questions, researchers using this approach have no control over how the data were collected or what kind of data were collected.

Types of Descriptive Research

Observation

Observational studies, also called naturalistic observation, involve watching and recording the actions of participants. This may take place in the natural setting, such as observing children at play in a park, or behind a one-way glass while children are at play in a laboratory playroom. The researcher may follow a checklist and record the frequency and duration of events (perhaps how many conflicts occur among 2-year-olds) or may observe and record as much as possible about an event as a participant (such as attending an Alcoholics Anonymous meeting and recording the slogans on the walls, the structure of the meeting, the expressions commonly used, etc.). The researcher may be a participant or a non-participant. What would be the strengths of being a participant? What would be the weaknesses?

In general, observational studies have the strength of allowing the researcher to see how people behave rather than relying on self-report. One weakness of self-report studies is that what people do and what they say they do are often very different. A major weakness of observational studies is that they do not allow the researcher to explain causal relationships. Yet observational studies are useful and widely used when studying children, who may not respond well to surveys. It is important to remember that most people tend to change their behavior when they know they are being watched (known as the Hawthorne effect).

Case Studies

Case studies involve exploring a single case or situation in great detail. Information may be gathered with the use of observation, interviews, testing, or other methods to uncover as much as possible about a person or situation. Case studies are helpful when investigating unusual situations such as brain trauma or children reared in isolation. And they are often used by clinicians who conduct case studies as part of their normal practice when gathering information about a client or patient coming in for treatment. Case studies can be used to explore areas about which little is known and can provide rich detail about situations or conditions. However, the findings from case studies cannot be generalized or applied to larger populations; this is because cases are not randomly selected and no control group is used for comparison. (Read The Man Who Mistook His Wife for a Hat by Dr. Oliver Sacks as a good example of the case study approach.)

Surveys

Surveys are familiar to most people because they are so widely used. Surveys enhance accessibility to subjects because they can be conducted in person, over the phone, through the mail, or online. A survey involves asking a standard set of questions to a group of subjects. In a highly structured survey, subjects are forced to choose from a response set such as “strongly disagree, disagree, undecided, agree, strongly agree”; or “0, 1-5, 6-10, etc.” Surveys are commonly used by sociologists, marketing researchers, political scientists, therapists, and others to gather information on many variables in a relatively short period of time. Surveys typically yield surface information on a wide variety of factors, but may not allow for an in-depth understanding of human behavior.

Of course, surveys can be designed in a number of ways. They may include forced-choice questions and semi-structured questions in which the researcher allows the respondent to describe or give details about certain events. One of the most difficult aspects of designing a good survey is wording questions in an unbiased way and asking the right questions so that respondents can give a clear response rather than choosing “undecided” each time. Knowing that 30% of respondents are undecided is of little use! So a lot of time and effort should be placed on the construction of survey items. One of the benefits of having forced-choice items is that each response is coded so that the results can be quickly entered and analyzed using statistical software. The analysis takes much longer when respondents give lengthy responses that must be analyzed in a different way. Surveys are useful in examining stated values, attitudes, opinions, and reporting on practices. However, they are based on self-report, or what people say they do rather than on observation, and this can limit accuracy. Validity refers to accuracy and reliability refers to consistency in responses to tests and other measures; great care is taken to ensure the validity and reliability of surveys.
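To illustrate how forced-choice responses are coded for analysis, here is a minimal Python sketch. The numeric codes (1 through 5), the helper names, and the ten responses are all made up for the example; the text lists the response labels but does not prescribe a coding scheme.

```python
from collections import Counter

# Assumed coding scheme: labels come from the text, numeric codes are illustrative
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "undecided": 3,
    "agree": 4,
    "strongly agree": 5,
}

def code_responses(responses):
    """Convert response labels to numeric codes, ignoring case and stray spaces."""
    return [LIKERT_CODES[response.strip().lower()] for response in responses]

def share_undecided(responses):
    """Proportion of respondents who chose the 'undecided' option."""
    codes = code_responses(responses)
    return Counter(codes)[LIKERT_CODES["undecided"]] / len(codes)

# Made-up answers from ten hypothetical respondents to a single survey item
responses = ["Agree", "undecided", "Strongly agree", "Disagree", "undecided",
             "agree", "Undecided", "strongly disagree", "agree", "agree"]

coded = code_responses(responses)
undecided_share = share_undecided(responses)
print(coded, undecided_share)
```

Once every answer is a number, tallies and proportions take one line each, which is why forced-choice items are so much faster to analyze than open-ended answers.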

Watch It

In this video, Harvard psychologist Dan Gilbert explains survey research that was conducted to explore the way our preferences change over time.

Content Analysis

Content analysis involves looking at media such as old texts, pictures, commercials, lyrics or other materials to explore patterns or themes in culture. An example of content analysis is the classic history of childhood by Aries (1962) called “Centuries of Childhood” or the analysis of television commercials for sexual or violent content or for ageism. Passages in text or television programs can be randomly selected for analysis as well. Again, one advantage of analyzing work such as this is that the researcher does not have to go through the time and expense of finding respondents, but the researcher cannot know how accurately the media reflects the actions and sentiments of the population.

Secondary content analysis, or archival research, involves analyzing information that has already been collected or examining documents or media to uncover attitudes, practices or preferences. There are a number of data sets available to those who wish to conduct this type of research. The researcher conducting secondary analysis does not have to recruit subjects but does need to know the quality of the information collected in the original study. And unfortunately, the researcher is limited to the questions asked and data collected originally.

Link to Learning

U.S. Census Data is available and widely used to look at trends and changes taking place in the United States (visit the United States Census website (http://www.census.gov/) and check it out). There are also a number of other agencies that collect data on family life, sexuality, and on many other areas of interest in human development (go to the NORC at the University of Chicago website (http://www.norc.uchicago.edu/) or the Henry J Kaiser Family Foundation website (http://www.kff.org/) and see what you find).

Correlational and Experimental Research

Correlational Research

When scientists passively observe and measure phenomena, it is called correlational research. Here, researchers do not intervene and change behavior, as they do in experiments. In correlational research, the goal is to identify patterns of relationships, but not cause and effect. Importantly, a single correlation describes the relationship between exactly two variables at a time, no more and no less.

So, what if you wanted to test whether spending money on others is related to happiness, but you don’t have $20 to give to each participant in order to have them spend it for your experiment? You could use a correlational design—which is exactly what Professor Elizabeth Dunn (2008) at the University of British Columbia did when she conducted research on spending and happiness. She asked people how much of their income they spent on others or donated to charity, and later she asked them how happy they were. Do you think these two variables were related? Yes, they were! The more money people reported spending on others, the happier they were.

Understanding Correlation

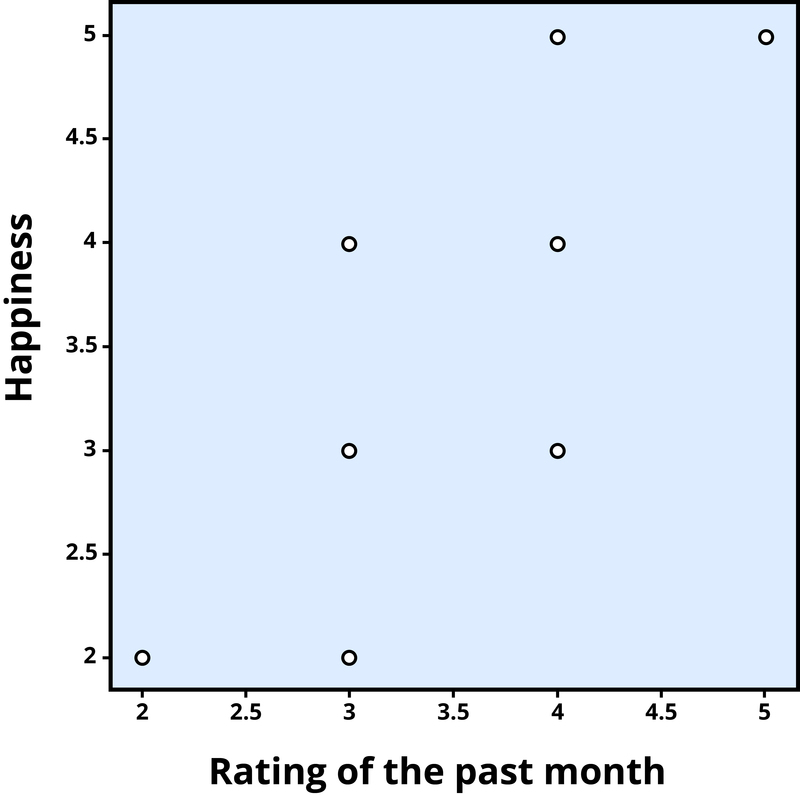

To find out how well two variables correlate, you can plot the relationship between the two scores on what is known as a scatterplot. In the scatterplot, each dot represents a data point. (In this case it’s individuals, but it could be some other unit.) Importantly, each dot provides us with two pieces of information—in this case, information about how good the person rated the past month (x-axis) and how happy the person felt in the past month (y-axis). Which variable is plotted on which axis does not matter.

The association between two variables can be summarized statistically using the correlation coefficient (abbreviated as r). A correlation coefficient provides information about the direction and strength of the association between two variables. For the example above, the direction of the association is positive. This means that people who perceived the past month as being good reported feeling happier, whereas people who perceived the month as being bad reported feeling less happy.

With a positive correlation, the two variables go up or down together. In a scatterplot, the dots form a pattern that extends from the bottom left to the upper right (just as they do in Figure 3). The r value for a positive correlation is indicated by a positive number (although the positive sign is usually omitted). Here, the r value is .81.

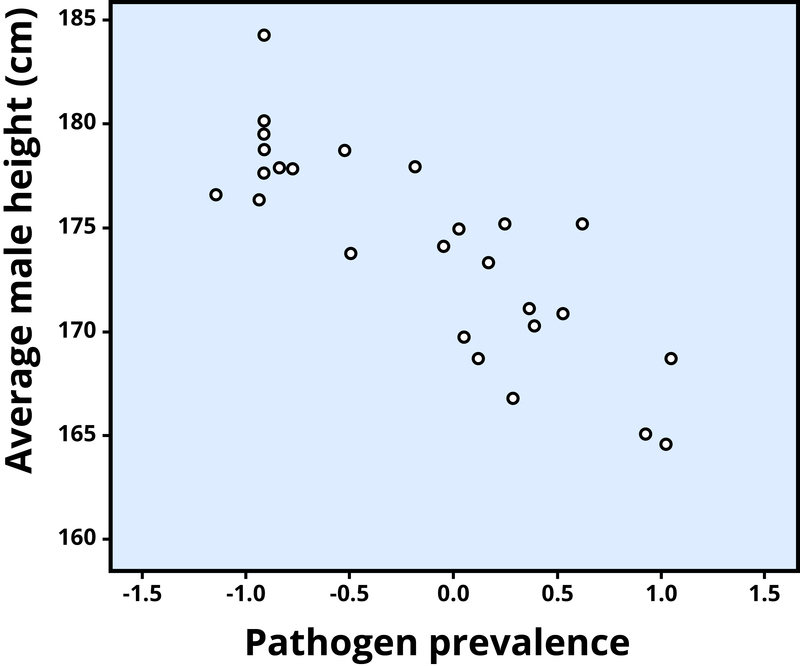

A negative correlation is one in which the two variables move in opposite directions. That is, as one variable goes up, the other goes down. Figure 4 shows the association between the average height of males in a country (y-axis) and the pathogen prevalence (or commonness of disease; x-axis) of that country. In this scatterplot, each dot represents a country. Notice how the dots extend from the top left to the bottom right. What does this mean in real-world terms? It means that people are shorter in parts of the world where there is more disease. The r value for a negative correlation is indicated by a negative number—that is, it has a minus (–) sign in front of it. Here, it is –.83.

The strength of a correlation has to do with how well the two variables align. Recall that in Professor Dunn’s correlational study, spending on others positively correlated with happiness; the more money people reported spending on others, the happier they reported being. At this point you may be thinking to yourself, I know a very generous person who gave away lots of money to other people but is miserable! Or maybe you know of a very stingy person who is happy as can be. Yes, there might be exceptions. If an association has many exceptions, it is considered a weak correlation. If an association has few or no exceptions, it is considered a strong correlation. A strong correlation is one in which the two variables always, or almost always, go together. In the example of happiness and how good the month has been, the association is strong. The stronger a correlation is, the more tightly the dots in the scatterplot will be arranged along a sloped line.

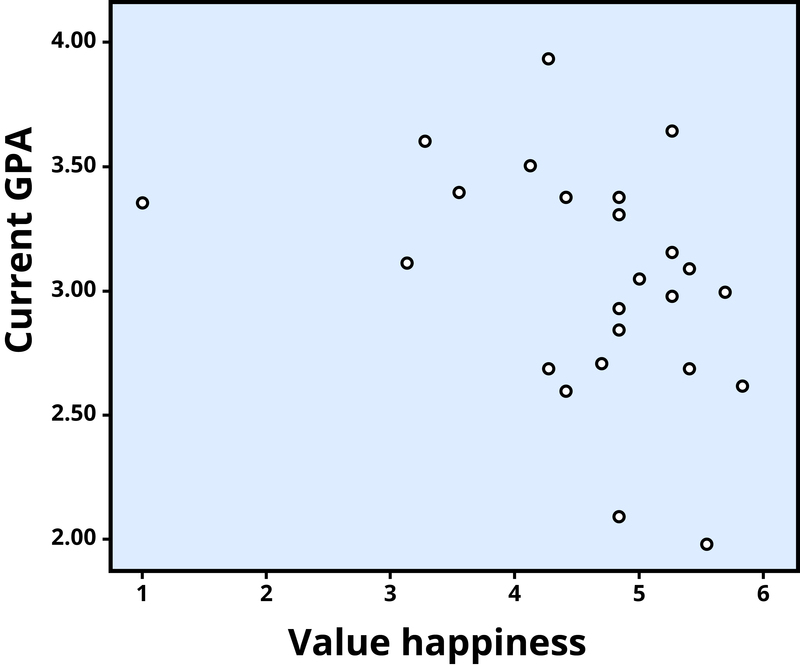

The r value of a strong correlation will have a high absolute value (a perfect correlation has an absolute value of the whole number one, or 1.00). In other words, you disregard whether there is a negative sign in front of the r value, and just consider the size of the numerical value itself. If the absolute value is large, it is a strong correlation. A weak correlation is one in which the two variables correspond some of the time, but not most of the time. Figure 5 shows the relation between valuing happiness and grade point average (GPA). People who valued happiness more tended to earn slightly lower grades, but there were lots of exceptions to this. The r value for a weak correlation will have a low absolute value. If two variables are so weakly related as to be unrelated, we say they are uncorrelated, and the r value will be zero or very close to zero. In the previous example, is the correlation between height and pathogen prevalence strong? Compared to Figure 5, the dots in Figure 4 are tighter and less dispersed. The absolute value of –.83 is large (closer to one than to zero). Therefore, it is a strong negative correlation.
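The text does not give a formula for r, so the sketch below assumes the commonly used Pearson correlation coefficient. The seven pairs of scores are invented for illustration, and the 0.5 cutoff between "weak" and "strong" is an arbitrary assumption, not an established rule.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length lists of scores."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How much the two variables vary together around their means
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariation / (spread_x * spread_y)

def describe(r, strong_cutoff=0.5):
    """Report direction from the sign and strength from the absolute value."""
    direction = "positive" if r > 0 else "negative" if r < 0 else "none"
    strength = "strong" if abs(r) >= strong_cutoff else "weak"
    return direction, strength

# Made-up scores for seven hypothetical people
month_rating = [1, 2, 3, 4, 5, 6, 7]   # how good the past month was
happiness = [2, 1, 4, 3, 6, 5, 7]      # how happy the person felt

r = pearson_r(month_rating, happiness)
print(round(r, 2), describe(r))
print(describe(-0.83))  # the height and pathogen prevalence value from the text
```

Note that `describe` looks at the sign only for direction and at the absolute value only for strength, which mirrors how the two properties are read off an r value.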

Problems with correlation

If generosity and happiness are positively correlated, should we conclude that being generous causes happiness? Similarly, if height and pathogen prevalence are negatively correlated, should we conclude that disease causes shortness? From a correlation alone, we can’t be certain. For example, in the first case, it may be that happiness causes generosity, or that generosity causes happiness. Or, a third variable might cause both happiness and generosity, creating the illusion of a direct link between the two. For example, wealth could be the third variable that causes both greater happiness and greater generosity. This is why correlation does not mean causation—an often repeated phrase among psychologists.
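A small simulation can make the third-variable problem concrete. Everything here is invented: the data are randomly generated, the variable names are illustrative, and the simulation simply assumes that wealth raises both scores by the same amount. Neither generosity nor happiness is ever computed from the other.

```python
import random
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

rng = random.Random(2008)  # fixed seed so the simulation is repeatable
n_people = 2000

# Simulated data: wealth feeds into BOTH scores, each with its own random noise
wealth = [rng.gauss(0, 1) for _ in range(n_people)]
generosity = [w + rng.gauss(0, 1) for w in wealth]
happiness = [w + rng.gauss(0, 1) for w in wealth]

r_observed = pearson_r(generosity, happiness)

# Subtract wealth's contribution. This is possible only because we built the
# data ourselves and know exactly how much wealth added to each score.
generosity_without_wealth = [g - w for g, w in zip(generosity, wealth)]
happiness_without_wealth = [h - w for h, w in zip(happiness, wealth)]
r_without_wealth = pearson_r(generosity_without_wealth, happiness_without_wealth)

print(round(r_observed, 2), round(r_without_wealth, 2))
```

The first correlation is clearly positive even though there is no direct link between the two variables in the simulation; once wealth's contribution is removed, the association all but disappears.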

Watch It

In this video, University of Pennsylvania psychologist and bestselling author, Angela Duckworth describes the correlational research that informed her understanding of grit.

Experimental Research

Experiments are designed to test hypotheses (or specific statements about the relationship between variables) in a controlled setting in efforts to explain how certain factors or events produce outcomes. A variable is anything that changes in value. Concepts are operationalized or transformed into variables in research which means that the researcher must specify exactly what is going to be measured in the study. For example, if we are interested in studying marital satisfaction, we have to specify what marital satisfaction really means or what we are going to use as an indicator of marital satisfaction. What is something measurable that would indicate some level of marital satisfaction? Would it be the amount of time couples spend together each day? Or eye contact during a discussion about money? Or maybe a subject’s score on a marital satisfaction scale? Each of these is measurable but these may not be equally valid or accurate indicators of marital satisfaction. What do you think? These are the kinds of considerations researchers must make when working through the design.

The experimental method is the only research method that can establish cause and effect relationships between variables. Three conditions must be met in order to establish cause and effect. Experimental designs are useful in meeting these conditions:

- The independent and dependent variables must be related. In other words, when one is altered, the other changes in response. The independent variable is something altered or introduced by the researcher; sometimes thought of as the treatment or intervention. The dependent variable is the outcome or the factor affected by the introduction of the independent variable; the dependent variable depends on the independent variable. For example, if we are looking at the impact of exercise on stress levels, the independent variable would be exercise; the dependent variable would be stress.

- The cause must come before the effect. Experiments measure subjects on the dependent variable before exposing them to the independent variable (establishing a baseline). So we would measure the subjects’ level of stress before introducing exercise and then again after the exercise to see if there has been a change in stress levels. (Observational and survey research do not always allow us to look at the timing of these events, which makes understanding causality problematic with these methods.)

- The cause must be isolated. The researcher must ensure that no outside, perhaps unknown, variables are actually causing the effect we see. The experimental design helps make this possible. In an experiment, we would make sure that our subjects’ diets were held constant throughout the exercise program. Otherwise, the diet, rather than the exercise, might really be creating a change in stress level.

A basic experimental design involves beginning with a sample (or subset of a population) and randomly assigning subjects to one of two groups: the experimental group or the control group. Ideally, to prevent bias, the participants would be blind to their condition (not aware of which group they are in) and the researchers would also be blind to each participant’s condition (referred to as “double blind”). The experimental group is the group that is going to be exposed to an independent variable or condition the researcher is introducing as a potential cause of an event. The control group is going to be used for comparison and is going to have the same experience as the experimental group but will not be exposed to the independent variable. This helps address the placebo effect, which is that a group may expect changes to happen just by participating. After exposing the experimental group to the independent variable, the two groups are measured again to see if a change has occurred. If so, we are in a better position to suggest that the independent variable caused the change in the dependent variable. The basic experimental model looks like this:

Table 1. Variables and Experimental and Control Groups

| Sample is randomly assigned to one of the groups below: | Measure DV | Introduce IV | Measure DV |
| --- | --- | --- | --- |
| Experimental Group | X | X | X |
| Control Group | X | – | X |
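The design in Table 1 (random assignment, then measuring the dependent variable before and after the independent variable is introduced) can be sketched in Python. The function names, participant labels, and stress scores below are all hypothetical and chosen only to show the mechanics, using the exercise and stress example from the text.

```python
import random

def randomly_assign(participants, seed=None):
    """Shuffle the sample and split it into experimental and control groups."""
    rng = random.Random(seed)  # a fixed seed only makes the split repeatable
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}

def mean_change(before, after):
    """Average change in the dependent variable from first to second measurement."""
    changes = [post - pre for pre, post in zip(before, after)]
    return sum(changes) / len(changes)

# Hypothetical sample of eight participants
groups = randomly_assign([f"P{i}" for i in range(1, 9)], seed=1)

# Made-up stress scores (dependent variable), measured before and after.
# Only the experimental group receives the exercise program (independent variable).
experimental_change = mean_change(before=[8, 7, 9, 6], after=[5, 5, 6, 4])
control_change = mean_change(before=[8, 7, 9, 6], after=[8, 6, 9, 6])

print(groups)
print(experimental_change, control_change)
```

Comparing the two average changes, rather than looking at the experimental group alone, is what lets the researcher separate the effect of the independent variable from changes that would have happened anyway.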

The major advantage of the experimental design is that of helping to establish cause and effect relationships. A disadvantage of this design is the difficulty of translating much of what concerns us about human behavior into a laboratory setting.

Link to Learning

Have you ever wondered why people make decisions that seem to be in opposition to their long-term best interest? In Eldar Shafir’s TED Talk Living Under Scarcity (https://www.youtube.com/watch?time_continue=18&v=gV1ESN8NGh8), Shafir describes a series of experiments that shed light on how scarcity (real or perceived) affects our decisions.

Developmental Research Designs

Now you know about some tools used to conduct research about human development. Remember, research methods are tools that are used to collect information. But it is easy to confuse research methods and research design. Research design is the strategy or blueprint for deciding how to collect and analyze information. Research design dictates which methods are used and how. Developmental research designs are techniques used particularly in lifespan development research. When we are trying to describe development and change, the research designs become especially important because we are interested in what changes and what stays the same with age. These techniques try to examine how age, cohort, gender, and social class impact development.

Cross-sectional designs

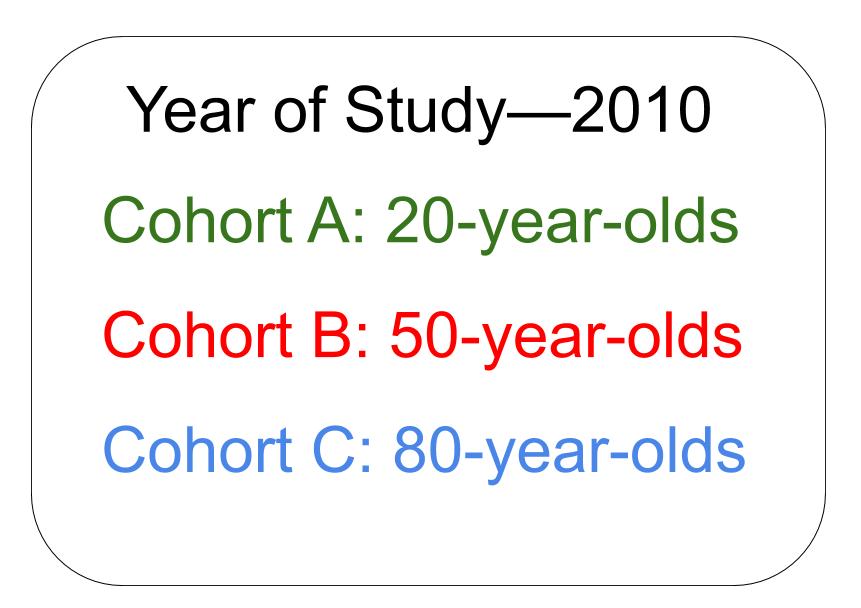

The majority of developmental studies use cross-sectional designs because they are less time-consuming and less expensive than other developmental designs. Cross-sectional research designs are used to examine behavior in participants of different ages who are tested at the same point in time. Let’s suppose that researchers are interested in the relationship between intelligence and aging. They might have a hypothesis (an educated guess, based on theory or observations) that intelligence declines as people get older. The researchers might choose to give a certain intelligence test to individuals who are 20 years old, individuals who are 50 years old, and individuals who are 80 years old at the same time and compare the data from each age group. This research is cross-sectional in design because the researchers plan to examine the intelligence scores of individuals of different ages within the same study at the same time; they are taking a “cross-section” of people at one point in time. Let’s say that the comparisons find that the 80-year-old adults score lower on the intelligence test than the 50-year-old adults, and the 50-year-old adults score lower on the intelligence test than the 20-year-old adults. Based on these data, the researchers might conclude that individuals become less intelligent as they get older. Would that be a valid (accurate) interpretation of the results?

No, that would not be a valid conclusion because the researchers did not follow individuals as they aged from 20 to 50 to 80 years old. One of the primary limitations of cross-sectional research is that the results yield information about age differences, not necessarily changes with age or over time. That is, although the study described above can show that in 2010, the 80-year-olds scored lower on the intelligence test than the 50-year-olds, and the 50-year-olds scored lower on the intelligence test than the 20-year-olds, the data used to come up with this conclusion were collected from different individuals (or groups of individuals). It could be, for instance, that when these 20-year-olds get older (50 and eventually 80), they will still score just as high on the intelligence test as they did at age 20. In a similar way, maybe the 80-year-olds would have scored relatively low on the intelligence test even at ages 50 and 20; the researchers don’t know for certain because they did not follow the same individuals as they got older.

It is also possible that the differences found between the age groups are not due to age, per se, but due to cohort effects. The 80-year-olds in this 2010 research grew up during a particular time and experienced certain events as a group. They were born in 1930 and are part of the Traditional or Silent Generation. The 50-year-olds were born in 1960 and are members of the Baby Boomer cohort. The 20-year-olds were born in 1990 and are part of the Millennial or Gen Y Generation. What kinds of things did each of these cohorts experience that the others did not experience or at least not in the same ways?

You may have come up with many differences between these cohorts’ experiences, such as living through certain wars, political and social movements, economic conditions, advances in technology, and changes in health and nutrition standards. There may be particular cohort differences that could especially influence their performance on intelligence tests, such as education level and use of computers. That is, many of those born in 1930 probably did not complete high school; those born in 1960 may have high school degrees, on average, but the majority did not attain college degrees; the young adults are probably current college students. And this is not even considering additional factors such as gender, race, or socioeconomic status. The young adults are used to taking tests on computers, but the members of the other two cohorts did not grow up with computers and may not be as comfortable if the intelligence test is administered on one. Any of these differences could have influenced the research results.

Another disadvantage of cross-sectional research is that it is limited to one time of measurement. Data are collected at one point in time, and it’s possible that something happened in that year in history that affected all of the participants, although each cohort may have been affected differently. Just think about the mindsets of participants in research that was conducted in the United States right after the terrorist attacks on September 11, 2001.
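To see why a single cross-sectional study cannot separate age from cohort, it can help to lay out the running example in a few lines of Python. This is only an illustrative sketch: the function name `cross_sectional_sample` is made up for this example, and the only data used are the 2010 test year and the three ages from the text.

```python
# Illustrative sketch only: the function name is hypothetical, and the numbers
# come from the 2010 intelligence-test example in the text.

def cross_sectional_sample(test_year, ages):
    """Return one record per age group, all tested in the same year."""
    return [
        {"age": age, "birth_year": test_year - age, "test_year": test_year}
        for age in ages
    ]

sample = cross_sectional_sample(2010, [20, 50, 80])

for group in sample:
    print(group)

# Count the distinct values of each variable in the design.
ages = {g["age"] for g in sample}
birth_years = {g["birth_year"] for g in sample}
test_years = {g["test_year"] for g in sample}

# Each age corresponds to exactly one birth year (cohort), and there is only
# one test year, so age and cohort cannot be told apart in these data.
print(len(ages), len(birth_years), len(test_years))
```

Because every age group belongs to a different cohort and everyone is tested in the same year, any score difference between the groups could reflect age, cohort, or both.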

Longitudinal research designs

Longitudinal research involves beginning with a group of people who may be of the same age and background (cohort) and measuring them repeatedly over a long period of time. One of the benefits of this type of research is that people can be followed through time and be compared with themselves when they were younger; therefore changes with age over time are measured. What would be the advantages and disadvantages of longitudinal research? Problems with this type of research include being expensive, taking a long time, and subjects dropping out over time. Think about the film, 63 Up, part of the Up Series mentioned earlier, which is an example of following individuals over time. In the videos, filmed every seven years, you see how people change physically, emotionally, and socially through time; and some remain the same in certain ways, too. But many of the participants really disliked being part of the project and repeatedly threatened to quit; one disappeared for several years; another died before her 63rd year. Would you want to be interviewed every seven years? Would you want to have it made public for all to watch?

Longitudinal research designs are used to examine behavior in the same individuals over time. For instance, with our example of studying intelligence and aging, a researcher might conduct a longitudinal study to examine whether 20-year-olds become less intelligent with age over time. To this end, a researcher might give an intelligence test to individuals when they are 20 years old, again when they are 50 years old, and then again when they are 80 years old. This study is longitudinal in nature because the researcher plans to study the same individuals as they age. Based on these data, the pattern of intelligence and age might look different from the pattern found in the cross-sectional research; it might be found that participants’ intelligence scores are higher at age 50 than at age 20 and then remain stable or decline a little by age 80. How can that be when cross-sectional research revealed declines in intelligence with age?

Since longitudinal research happens over a period of time (which could be short term, as in months, but is often longer, as in years), there is a risk of attrition. Attrition occurs when participants fail to complete all portions of a study. Participants may move, change their phone numbers, die, or simply become uninterested in participating over time. Researchers should account for the possibility of attrition by enrolling a larger sample into their study initially, as some participants will likely drop out over time. There is also something known as selective attrition, which means that certain groups of individuals may be more likely to drop out than others. It is often the least healthy, the least educated, and those of lower socioeconomic status who tend to drop out over time. That means that the remaining participants may no longer be representative of the whole population, as they are, in general, healthier, better educated, and wealthier. This could be a factor in why our hypothetical research found a more optimistic picture of intelligence and aging as the years went by. What can researchers do about selective attrition? At each time of testing, they could randomly recruit more participants from the same cohort as the original members to replace those who have dropped out.
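A small numerical sketch can make both ideas concrete: enrolling extra participants to offset expected dropout, and how selective attrition can raise a group average even when no individual changes. All numbers here (the 80% retention at each follow-up, the target of 100 completers, and the six test scores) are invented for illustration and do not come from any study. The function names are hypothetical as well.

```python
import math

def initial_enrollment(target_completers, retention_per_wave, follow_up_waves):
    """Estimate how many people to enroll so that about `target_completers`
    remain, assuming the same (hypothetical) retention rate at every follow-up."""
    expected_fraction_left = retention_per_wave ** follow_up_waves
    return math.ceil(target_completers / expected_fraction_left)

# Hypothetical plan: 100 participants still enrolled after two follow-ups
# (for example, at ages 50 and 80), with 80% returning each time.
needed = initial_enrollment(100, 0.80, 2)
print(needed)  # 157

def mean(values):
    return sum(values) / len(values)

# Invented scores for six participants at the first time of testing.
wave1_scores = [85, 90, 95, 100, 110, 120]

# Selective attrition: suppose the three lowest scorers drop out and the
# remaining participants score exactly the same at the next testing.
wave2_scores = sorted(wave1_scores)[3:]

print(mean(wave1_scores))  # 100.0
print(mean(wave2_scores))  # 110.0
```

In this made-up case the group average rises by 10 points even though no one's score changed, which is the kind of distortion selective attrition can introduce.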

The results from longitudinal studies may also be impacted by repeated assessments. Consider how well you would do on a math test if you were given the exact same exam every day for a week. Your performance would likely improve over time, not necessarily because you developed better math abilities, but because you were continuously practicing the same math problems. This phenomenon is known as a practice effect. Practice effects occur when participants become better at a task over time because they have done it again and again (not due to natural psychological development). So our participants may have become familiar with the intelligence test each time (and with the computerized testing administration).

Another limitation of longitudinal research is that the data are limited to only one cohort. As an example, think about how comfortable the participants in the 2010 cohort of 20-year-olds are with computers. Since only one cohort is being studied, there is no way to know if findings would be different from other cohorts. In addition, changes that are found as individuals age over time could be due to age or to time of measurement effects. That is, the participants are tested at different periods in history, so the variables of age and time of measurement could be confounded (mixed up). For example, what if there is a major shift in workplace training and education between 2020 and 2040 and many of the participants experience a lot more formal education in adulthood, which positively impacts their intelligence scores in 2040? Researchers wouldn’t know if the intelligence scores increased due to growing older or due to a more educated workforce over time between measurements.

Sequential research designs

Sequential research designs include elements of both longitudinal and cross-sectional research designs. Similar to longitudinal designs, sequential research features participants who are followed over time; similar to cross-sectional designs, sequential research includes participants of different ages. This research design is also distinct from those that have been discussed previously in that individuals of different ages are enrolled into a study at various points in time. This allows researchers to examine age-related changes and development within the same individuals as they age, and to account for the possibility of cohort and/or time of measurement effects. In 1965, K. Warner Schaie[1] (a leading theorist and researcher on intelligence and aging) described particular sequential designs: cross-sequential, cohort-sequential, and time-sequential. The differences between them depended on which variables were focused on for analyses of the data (data could be viewed in terms of multiple cross-sectional designs or multiple longitudinal designs or multiple cohort designs). Ideally, by comparing results from the different types of analyses, the effects of age, cohort, and time in history could be separated out.

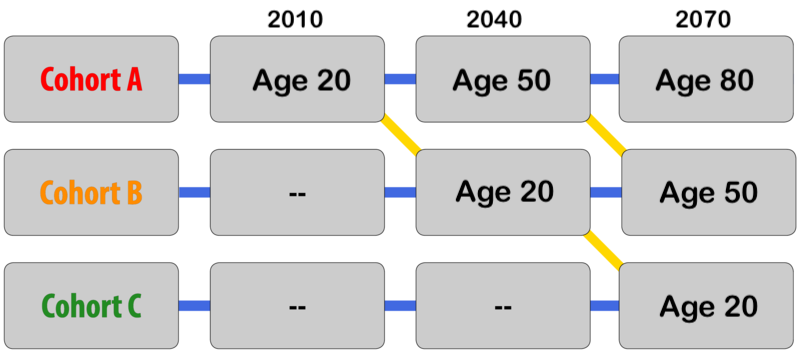

Consider, once again, our example of intelligence and aging. In a study with a sequential design, a researcher might recruit three separate groups of participants (Groups A, B, and C). Group A would be recruited when they are 20 years old in 2010 and would be tested again when they are 50 and 80 years old in 2040 and 2070, respectively (similar in design to the longitudinal study described previously). Group B would be recruited when they are 20 years old in 2040 and would be tested again when they are 50 years old in 2070. Group C would be recruited when they are 20 years old in 2070 and so on.

Studies with sequential designs are powerful because they allow for both longitudinal and cross-sectional comparisons—changes and/or stability with age over time can be measured and compared with differences between age and cohort groups. This research design also allows for the examination of cohort and time of measurement effects. For example, the researcher could examine the intelligence scores of 20-year-olds in different times in history and different cohorts (follow the yellow diagonal lines in figure 3). This might be examined by researchers who are interested in sociocultural and historical changes (because we know that lifespan development is multidisciplinary). One way of looking at the usefulness of the various developmental research designs was described by Schaie and Baltes (1975)[2]: cross-sectional and longitudinal designs might reveal change patterns while sequential designs might identify developmental origins for the observed change patterns.
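The comparisons available in the sequential example can be laid out as a small testing schedule. The sketch below uses the group labels, recruitment years, and 30-year testing interval from the text; stopping the schedule at 2070 and the function name `sequential_schedule` are assumptions made only for this illustration.

```python
# Sketch of the testing schedule for the sequential example in the text.
# Assumptions: the schedule stops in 2070, and the function name is hypothetical.

def sequential_schedule(recruit_years, start_age=20, interval=30, last_year=2070):
    """Build one row per testing occasion for each recruited group."""
    rows = []
    for label, recruit_year in recruit_years.items():
        year = recruit_year
        while year <= last_year:
            rows.append({
                "group": label,
                "birth_year": recruit_year - start_age,
                "test_year": year,
                "age": start_age + (year - recruit_year),
            })
            year += interval
    return rows

schedule = sequential_schedule({"A": 2010, "B": 2040, "C": 2070})

# Longitudinal comparison: the same group followed as it ages.
group_a = [(r["test_year"], r["age"]) for r in schedule if r["group"] == "A"]

# Cross-sectional comparison: different ages tested in the same year.
in_2070 = [(r["group"], r["age"]) for r in schedule if r["test_year"] == 2070]

# Same age tested at different times in history (different cohorts).
age_20 = [(r["group"], r["test_year"]) for r in schedule if r["age"] == 20]

print(group_a)  # [(2010, 20), (2040, 50), (2070, 80)]
print(in_2070)  # [('A', 80), ('B', 50), ('C', 20)]
print(age_20)   # [('A', 2010), ('B', 2040), ('C', 2070)]
```

Reading the same schedule three ways (by group, by test year, and by age) mirrors the longitudinal, cross-sectional, and cohort comparisons that a sequential design makes possible.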

Since it includes elements of longitudinal and cross-sectional designs, sequential research has many of the same strengths and limitations as these other approaches. For example, sequential work may require less time and effort than longitudinal research (if data are collected more frequently than over the 30-year spans in our example) but more time and effort than cross-sectional research. Although practice effects may be an issue if participants are asked to complete the same tasks or assessments over time, attrition may be less problematic than what is commonly experienced in longitudinal research since participants may not have to remain involved in the study for such a long period of time.

When considering the best research design to use in their research, scientists think about their main research question and the best way to come up with an answer. A table of advantages and disadvantages for each of the described research designs is provided here to help you as you consider what sorts of studies would be best conducted using each of these different approaches.

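Table 2. Advantages and disadvantages of different research designs

| Research design | Advantages | Disadvantages |
| --- | --- | --- |
| Cross-Sectional | Less time-consuming and less expensive than other developmental designs; compares participants of different ages at the same point in time | Shows age differences, not necessarily changes with age; age differences may be confounded with cohort effects; limited to one time of measurement |
| Longitudinal | Follows the same individuals over time; measures changes with age | Expensive; takes a long time; attrition and selective attrition; practice effects; limited to one cohort; age may be confounded with time of measurement |
| Sequential | Allows both longitudinal and cross-sectional comparisons; allows examination of cohort and time of measurement effects | Requires more time and effort than cross-sectional research; practice effects are possible |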

Challenges Conducting Developmental Research

Challenges Associated with Conducting Developmental Research

The previous sections describe research tools to assess development across the lifespan, as well as the ways that research designs can be used to track age-related changes and development over time. Before you begin conducting developmental research, however, you must also be aware that testing individuals of certain ages (such as infants and children) or making comparisons across ages (such as children compared to teens) comes with its own unique set of challenges. In the final section of this module, let’s look at some of the main issues that are encountered when conducting developmental research, namely ethical concerns, recruitment issues, and participant attrition.

Ethical Concerns

As a student of the social sciences, you may already know that Institutional Review Boards (IRBs) must review and approve all research projects that are conducted at universities, hospitals, and other institutions (each broad discipline or field, such as psychology or social work, often has its own code of ethics that must also be followed, regardless of institutional affiliation). An IRB is typically a panel of experts who read and evaluate proposals for research. IRB members want to ensure that the proposed research will be carried out ethically and that the potential benefits of the research outweigh the risks and potential harm (psychological as well as physical harm) for participants.

What you may not know, though, is that the IRB considers some groups of participants to be more vulnerable or at-risk than others. Whereas university students are generally not viewed as vulnerable or at-risk, infants and young children commonly fall into this category. What makes infants and young children more vulnerable during research than young adults? One reason infants and young children are perceived as being at increased risk is their limited cognitive capabilities, which make them unable to state their willingness to participate in research or tell researchers when they would like to drop out of a study. For these reasons, infants and young children require special accommodations as they participate in the research process. Similar issues and accommodations would apply to adults who are deemed to be of limited cognitive capabilities.

When thinking about special accommodations in developmental research, consider the informed consent process. If you have ever participated in scientific research, you may know through your own experience that adults commonly sign an informed consent statement (a contract stating that they agree to participate in research) after learning about a study. As part of this process, participants are informed of the procedures to be used in the research, along with any expected risks or benefits. Infants and young children cannot verbally indicate their willingness to participate, much less understand the balance of potential risks and benefits. As such, researchers are oftentimes required to obtain written informed consent from the parent or legal guardian of the child participant, an adult who is almost always present as the study is conducted. In fact, children are not asked to indicate whether they would like to be involved in a study at all (a process known as assent) until they are approximately seven years old. Because infants and young children cannot easily indicate if they would like to discontinue their participation in a study, researchers must be sensitive to changes in the state of the participant (determining whether a child is too tired or upset to continue) as well as to parent desires (in some cases, parents might want to discontinue their involvement in the research). As in adult studies, researchers must always strive to protect the rights and well-being of the minor participants and their parents when conducting developmental research.

Watch It

This video from the US Department of Health and Human Services provides an overview of the Institutional Review Board process.

Recruitment

An additional challenge in developmental science is participant recruitment. Recruiting university students to participate in adult studies is typically easy. Many colleges and universities offer extra credit for participation in research and have locations such as bulletin boards and school newspapers where research can be advertised. Unfortunately, young children cannot be recruited by making announcements in Introduction to Psychology courses, by posting ads on campuses, or through online platforms such as Amazon Mechanical Turk. Given these limitations, how do researchers go about finding infants and young children to be in their studies?

The answer to this question varies along multiple dimensions. Researchers must consider the number of participants they need and the financial resources available to them, among other things. Location may also be an important consideration. Researchers who need large numbers of infants and children may attempt to recruit them by obtaining infant birth records from the state, county, or province in which they reside. Some areas make this information publicly available for free, whereas birth records must be purchased in other areas (and in some locations birth records may be entirely unavailable as a recruitment tool). If birth records are available, researchers can use the obtained information to call families by phone or mail them letters describing possible research opportunities. All is not lost if this recruitment strategy is unavailable, however. Researchers can choose to pay a recruitment agency to contact and recruit families for them. Although these methods tend to be quick and effective, they can also be quite expensive. More economical recruitment options include posting advertisements and fliers in locations frequented by families, such as mommy-and-me classes, local malls, and preschools or daycare centers. Researchers can also utilize online social media outlets like Facebook, which allows users to post recruitment advertisements for a small fee. Of course, each of these different recruitment techniques requires IRB approval. And if children are recruited and/or tested in school settings, permission would need to be obtained ahead of time from teachers, schools, and school districts (as well as informed consent from parents or guardians).

And what about the recruitment of adults? While it is easy to recruit young college students to participate in research, some would argue that it is too easy and that college students are samples of convenience. They are not randomly selected from the wider population, and they may not represent all young adults in our society (this was particularly true in the past with certain cohorts, as college students tended to be mainly white males of high socioeconomic status). In fact, in the early research on aging, this type of convenience sample was compared with another type of convenience sample: young college students tended to be compared with residents of nursing homes! Fortunately, it didn’t take long for researchers to realize that older adults in nursing homes are not representative of the older population; they tend to be the oldest and sickest (physically and/or psychologically). Those initial studies probably painted an overly negative view of aging, as young adults in college were being compared to older adults who were not healthy, had not been in school or taken tests in many decades, and probably did not graduate high school, let alone college. As we can see, recruitment and random sampling can be significant issues in research with adults, as well as infants and children. For instance, how and where would you recruit middle-aged adults to participate in your research?

Attrition

Another important consideration when conducting research with infants and young children is attrition. Although attrition is quite common in longitudinal research in particular (see the previous section on longitudinal designs for an example of high attrition rates and selective attrition in lifespan developmental research), it is also problematic in developmental science more generally, as studies with infants and young children tend to have higher attrition rates than studies with adults. For example, high attrition rates in ERP (event-related potential, which is a technique to understand brain function) studies oftentimes result from the demands of the task: infants are required to sit still and have a tight, wet cap placed on their heads before watching still photographs on a computer screen in a dark, quiet room. In other cases, attrition may be due to motivation (or a lack thereof). Whereas adults may be motivated to participate in research in order to receive money or extra course credit, infants and young children are not as easily enticed. In addition, infants and young children are more likely to tire easily, become fussy, and lose interest in the study procedures than are adults. For these reasons, research studies should be designed to be as short as possible – it is likely better to break up a large study into multiple short sessions rather than cram all of the tasks into one long visit to the lab. Researchers should also allow time for breaks in their study protocols so that infants can rest or have snacks as needed. Happy, comfortable participants provide the best data.

Conclusions

Lifespan development is a fascinating field of study, but care must be taken to ensure that researchers use appropriate methods to examine human behavior, use the correct research design to answer their questions, and are aware of the special challenges that are part and parcel of developmental research. After reading this module, you should have a solid understanding of these various issues and be ready to think more critically about research questions that interest you. For example, what types of questions do you have about lifespan development? What types of research would you like to conduct? Many interesting questions remain to be examined by future generations of developmental scientists. Maybe you will make one of the next big discoveries!

GLOSSARY

attrition: reduction in the number of research participants as some drop out over time

case study: exploring a single case or situation in great detail. Information may be gathered with the use of observation, interviews, testing, or other methods to uncover as much as possible about a person or situation

content analysis: involves looking at media such as old texts, pictures, commercials, lyrics or other materials to explore patterns or themes in culture

control group: a comparison group that is equivalent to the experimental group, but is not given the independent variable

correlation: the relationship between two or more variables; when two variables are correlated, one variable changes as the other does

correlational research: research design with the goal of identifying patterns of relationships, but not cause and effect

correlation coefficient: number from -1 to +1, indicating the strength and direction of the relationship between variables, and usually represented by r

cross-sectional research: used to examine behavior in participants of different ages who are tested at the same point in time; may confound age and cohort differences

dependent variable: the outcome or variable that is supposedly affected by the independent variable

descriptive studies: research focused on describing an occurrence

double-blind: a research design in which neither the participants nor the researchers know whether an individual is assigned to the experimental group or the control group

evaluation research: research designed to assess the effectiveness of policies or programs

experimental group: the group of participants in an experiment who receive the independent variable

experiments: designed to test hypotheses in a controlled setting in efforts to explain how certain factors or events produce outcomes; the only research method that measures cause and effect relationships between variables

experimental research: research that involves randomly assigning people to different conditions and using hypothesis testing to make inferences about how these conditions affect behavior; the only method that measures cause and effect between variables

explanatory studies: research that tries to answer the question “why”

Hawthorne effect: individuals tend to change their behavior when they know they are being watched

hypotheses: specific statements or predictions about the relationship between variables

independent variable: something that is manipulated or introduced by the researcher to the experimental group; treatment or intervention

informed consent: a process of informing a research participant what to expect during a study, any risks involved, and the implications of the research, and then obtaining the person’s agreement to participate

Institutional Review Boards (IRBs): a panel of experts who review research proposals for any research to be conducted in association with the institution (for example, a university)

longitudinal research: studying a group of people who may be of the same age and background (cohort), and measuring them repeatedly over a long period of time; may confound age and time of measurement effects

negative correlation: two variables change in different directions, with one becoming larger as the other becomes smaller; a negative correlation is not the same thing as no correlation

observational studies: also called naturalistic observation, involves watching and recording the actions of participants

operationalized: concepts transformed into variables that can be measured in research

positive correlation: two variables change in the same direction, both becoming either larger or smaller

qualitative research: theoretical ideas are “grounded” in the experiences of the participants, who answer open-ended questions

quantitative research: involves numerical data that are quantified using statistics to understand and report what has been studied

reliability: when something yields consistent results

research design: the strategy or blueprint for deciding how to collect and analyze information; dictates which methods are used and how

scatterplot: a plot or mathematical diagram consisting of data points that represent two variables

secondary content analysis: archival research, involves analyzing information that has already been collected or examining documents or media to uncover attitudes, practices or preferences

selective attrition: certain groups of individuals may tend to drop out more frequently, resulting in the remaining participants no longer being representative of the whole population

sequential research design: combines aspects of cross-sectional and longitudinal designs and also adds new cohorts at different times of measurement; allows for analyses to consider effects of age, cohort, time of measurement, and socio-historical change

survey: asking a standard set of questions to a group of subjects

validity: when something yields accurate results

variables: factors that change in value